From "Adventure" to "Efficiency Revolution"—KEYE Technology Reconstructs Industrial Productivity with AI Computing Power

Date: 2025-11-13

Computing power is the core driving force of the information age: the amount available directly determines how quickly and efficiently data can be processed. It only becomes a real productive force, however, once it is integrated into industry.

Engine fuel, the "New Energy" of the digital economy.

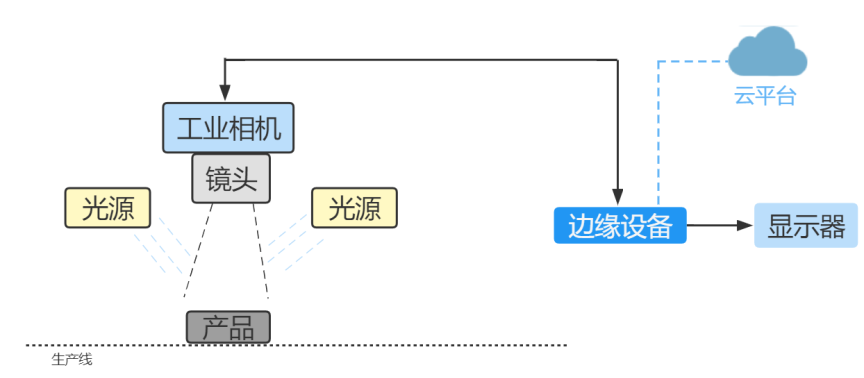

The key to transforming computing power into productivity lies in its deep integration with industry needs. Traditional manufacturing suffers from low production efficiency and high energy consumption, creating a demand for edge computing and intelligent computing. By deploying edge computing nodes on production lines, massive amounts of data collected by sensors can be processed in real time, and AI computing power can be used to optimize production processes, thus achieving intelligent manufacturing. The core driving force behind the upgrading and iteration of computing power technology comes precisely from these tangible industry pain points.

Evolution from "Centralized Cloud" to "Distributed Edge-Cloud"

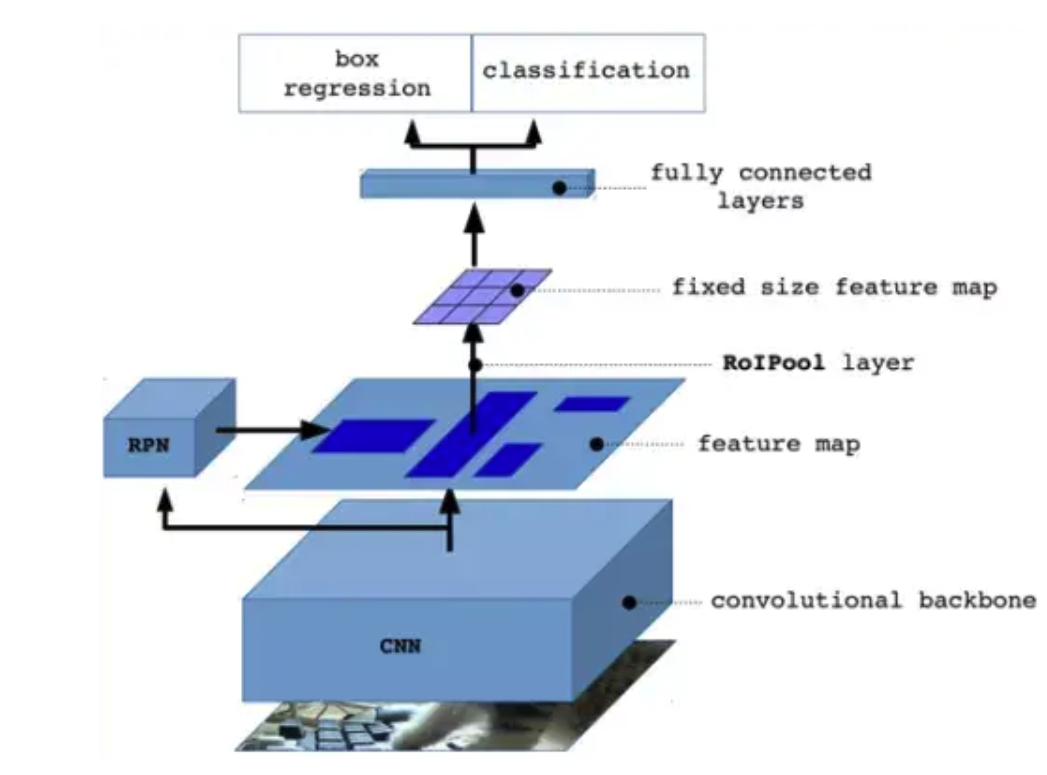

Driven by industry pain points, AI visual inspection platforms have evolved from a simple "model training tool" into a "powerful tool for the entire R&D process," covering the full lifecycle from data collection, annotation and cleaning, through model training, deployment and inference, to monitoring and maintenance. However, traditional platforms rely heavily on cloud-based centralized architectures, which have gradually revealed two core contradictions in practical applications:

Explosive growth of data and cloud transmission bottlenecks: In industrial scenarios, the volume of defect data keeps growing. If all of it is uploaded to the cloud, it will occupy more than 70% of the industrial network bandwidth, leading to congestion.

Real-time decision-making requirements and cloud latency limitations: Industrial quality inspection requires millisecond-level response (such as defect detection in high-speed production lines), while cloud processing (including network transmission) typically has an average latency of over 100ms, which cannot meet real-time requirements.

At the heart of these contradictions lies the mismatch between centralized computing architecture and distributed business needs. The emergence of edge computing extends computing power from the cloud to the "edge" of the physical world, providing a new paradigm for resolving these contradictions.

KEYETECH-AI edge computing redefines the boundaries of AI computing power.

KEYETECH-AI edge computing is not simply "distributed computing," but rather it pushes data processing, storage, and AI inference capabilities down to physical devices or "edge nodes" closer to the data source, forming a collaborative "edge-cloud" architecture. Its core value is reflected in four aspects:

High Computing Power: KEYETECH's AI edge computing unit boasts a single-unit computing power of up to 32 TOPS, processing 400-500 images per second.

Low Latency: Local data processing avoids long-distance network transmission, reducing end-to-end latency from over 100ms in the cloud to less than 10ms (depending on the distance between the edge node and the device).

Bandwidth Optimization: After filtering, cleaning, and feature extraction of raw data, edge nodes upload only critical information, reducing data transmission volume by over 90%.

Higher Stability: Edge deployments often face unstable network connections. KEYETECH-AI edge computing units have a degree of autonomy: they support offline AI inference, so critical tasks keep running even when the network is down.
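The bandwidth and stability points above can be illustrated with a minimal Python sketch. It is not KEYETECH's implementation: the `EdgeNode` class, the `defect_score` field, the 0.5 threshold, and the buffer size are all hypothetical names and values chosen for illustration. The node makes every pass/fail decision locally, queues only flagged results for upload, and keeps working while the network is down.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Inspection:
    frame_id: int
    defect_score: float  # hypothetical model output in [0, 1]


class EdgeNode:
    """Illustrative edge node: decides locally, uploads only flagged frames."""

    def __init__(self, threshold: float = 0.5, buffer_size: int = 1000):
        self.threshold = threshold
        # Bounded queue of results waiting for the cloud; when full, the
        # oldest entry is dropped (deque maxlen behaviour).
        self.pending = deque(maxlen=buffer_size)
        self.uploaded = []  # stands in for the cloud side

    def process(self, inspection: Inspection, network_up: bool) -> bool:
        # The pass/fail decision never waits on the network.
        is_defect = inspection.defect_score >= self.threshold
        if is_defect:
            self.pending.append(inspection)  # clean frames are never queued
        if network_up:
            self.flush()
        return is_defect

    def flush(self) -> None:
        # Send everything queued while offline, oldest first.
        while self.pending:
            self.uploaded.append(self.pending.popleft())


node = EdgeNode()
scores = [0.1, 0.9, 0.2, 0.7, 0.05, 0.3]
for i, score in enumerate(scores):
    # Simulate an outage for the first four frames.
    node.process(Inspection(i, score), network_up=(i >= 4))

print(len(node.uploaded), "of", len(scores), "results uploaded")
```

In this toy run only the flagged frames ever leave the node, and the results produced during the simulated outage are delivered once the link returns. A real system would upload extracted features or cropped defect regions rather than Python objects.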

The essence of edge-cloud collaboration: "three-tier collaboration" of data, models, and computing power.

The KEYETECH-AI edge computing unit, developed by KEYETECH Technology, forms a device-edge-cloud collaborative architecture. This is not a simple division of labor, but a deep integration along three dimensions.

Data Collaboration: The edge is responsible for data collection, preprocessing, and feature extraction, while the cloud is responsible for data storage, labeling, and big data analysis, forming a closed loop of data flow: "edge filtering - cloud accumulation".

Model Collaboration: General-purpose large models are trained in the cloud, and lightweight models are deployed at the edge. Through technologies such as model compression, parameter updates, and federated learning, the model lifecycle management of "cloud optimization - edge inference" is realized.

Computing Power Collaboration: Dynamically allocate computing power between the edge and the cloud based on the real-time nature, complexity, and resource requirements of the task (e.g., prioritize the edge for real-time tasks and process non-real-time tasks in the cloud) to achieve optimal configuration of global computing power resources.
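The computing power collaboration rule above (real-time tasks on the edge, non-real-time tasks in the cloud) can be sketched as a small placement function. This is an illustrative sketch only: the `Task` fields, the `edge_share` percentage, and the `assign` function are hypothetical, and the 100 ms threshold simply reuses the cloud latency figure quoted earlier in this article.

```python
from dataclasses import dataclass

CLOUD_LATENCY_MS = 100.0  # typical cloud round trip quoted in this article


@dataclass
class Task:
    name: str
    deadline_ms: float  # how soon a result is needed
    edge_share: int     # hypothetical: percent of edge capacity required


def assign(tasks, edge_capacity_pct: int = 100) -> dict:
    """Place each task on 'edge' or 'cloud'; tightest deadlines go first."""
    placement = {}
    free = edge_capacity_pct
    for task in sorted(tasks, key=lambda t: t.deadline_ms):
        real_time = task.deadline_ms < CLOUD_LATENCY_MS
        if not real_time:
            placement[task.name] = "cloud"  # cloud latency is acceptable
        elif task.edge_share <= free:
            placement[task.name] = "edge"
            free -= task.edge_share
        else:
            # A real-time task that does not fit signals that more edge
            # capacity is needed; the cloud cannot meet its deadline.
            placement[task.name] = "unschedulable"
    return placement


tasks = [
    Task("defect_detection", deadline_ms=10, edge_share=60),
    Task("model_retraining", deadline_ms=3_600_000, edge_share=90),
    Task("label_ocr", deadline_ms=50, edge_share=30),
]
print(assign(tasks))
```

Sorting by deadline means the most time-critical work claims edge capacity first. A production scheduler would also weigh task complexity and resource requirements, as the paragraph above notes.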

Compared with traditional centralized computing, the core advantages of edge computing are "low latency (millisecond-level response)," "bandwidth optimization (reducing invalid data uploads by more than 70%)" and "local decision-making (can still run independently when the network is down)," which perfectly match the core requirements of predictive maintenance for real-time performance and reliability.

Examples:

"Dozens of industrial cameras are activated simultaneously, capturing over 400 images per second. The microsecond-level data processing runs smoothly with the support of 608T computing power—this is no longer a scene from a science fiction movie, but a daily occurrence at Keye Technology in its Moutai bottle inspection project."

In the Wuliangye project, a single device can connect to more than 40 cameras and process more than 400 images per second while keeping 30% of its computing power in reserve. As data volumes grow, computing power can be added to the system architecture to match the additional AI inference load.
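The scaling claim can be made concrete with a little capacity arithmetic. The sketch below assumes that "30% redundancy" means each unit keeps 30% of its rated throughput idle, which is one possible reading of the figure. The `units_needed` helper and the 600 images/s unit rating are hypothetical values for illustration, not published specifications.

```python
import math


def units_needed(images_per_s: int, unit_capacity: int, reserve_pct: int = 30) -> int:
    """Units required so that each keeps `reserve_pct` percent of capacity idle."""
    usable_per_unit = unit_capacity * (100 - reserve_pct) / 100
    return math.ceil(images_per_s / usable_per_unit)


# Hypothetical unit rated at 600 images/s, so 420 images/s usable at 30% reserve.
for load in (400, 840, 1200):
    print(load, "images/s ->", units_needed(load, unit_capacity=600), "unit(s)")
```

Under these assumptions, tripling the image rate from 400 to 1200 images/s takes three units instead of one, which is the sense in which capacity grows with the data.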

Keye Technology's path of self-developed computing power is not only a technological breakthrough, but also a reconstruction of the essence of industrial productivity—solving the dilemma of generalization with specialized computing power, defining reliability standards with stability, and achieving green intelligent manufacturing with low power consumption.

Popular Science Knowledge – What is an Edge Computing Unit?

To make it easier to understand, we can think of one of the world's most amazing creatures: the octopus. As one of the most intelligent invertebrates, the octopus has a massive number of neurons, but 60% of them are distributed across its eight arms, while only 40% are in its brain. When escaping or hunting, it is exceptionally fast, and its eight arms move in a coordinated way without ever getting tangled or knotted. This is thanks to the octopus's distributed-computing-like arrangement of "multiple cerebellums + one brain".